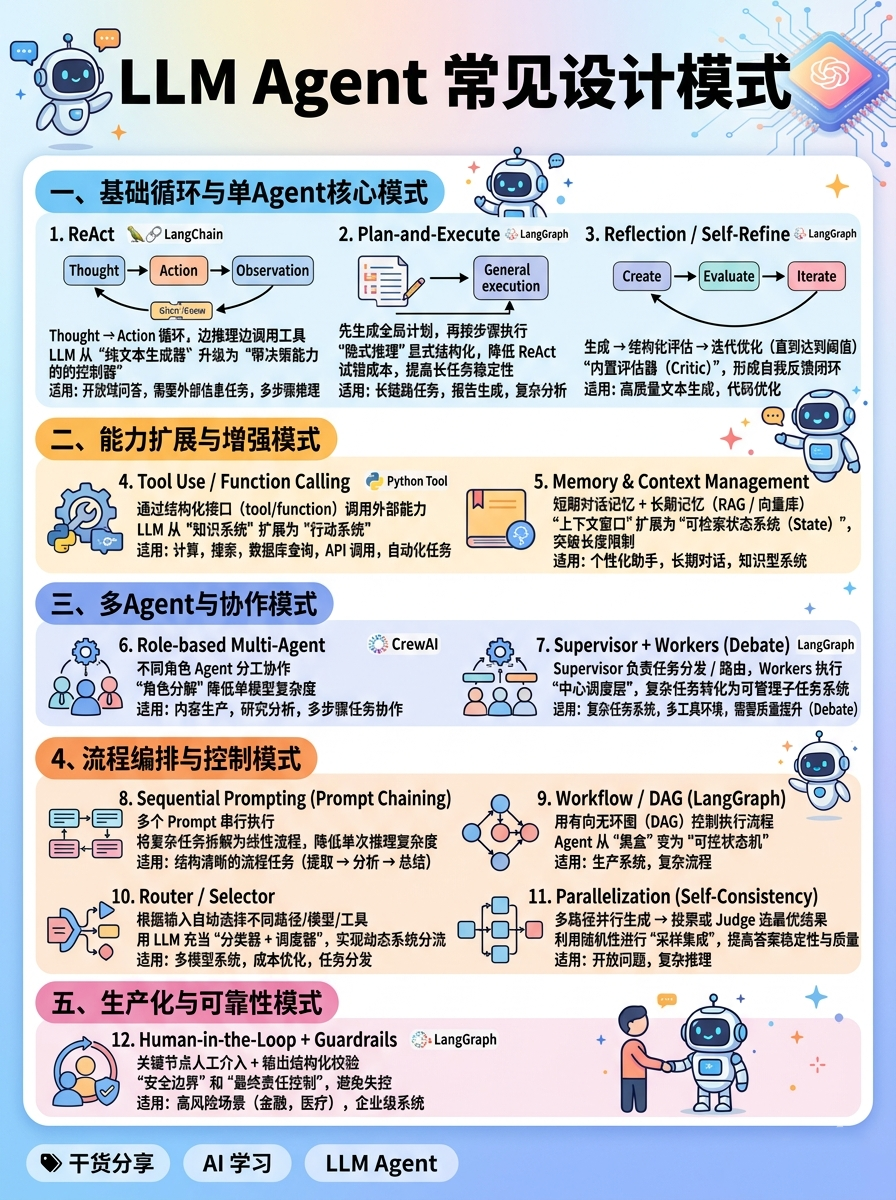

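Core: a Thought → Action → Observation loop that interleaves reasoning with tool calls.

Essence: upgrades the LLM from a "pure text generator" to a "controller with decision-making ability" that decides dynamically, during reasoning, whether to call an external capability.

Use cases: open-domain question answering, tasks that need external information, and multi-step reasoning (search, lookup, tool calls).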

    from langchain.agents import create_agent
    from langchain_openai import ChatOpenAI
    from langchain_community.tools import DuckDuckGoSearchRun

    llm = ChatOpenAI(temperature=0)
    tools = [DuckDuckGoSearchRun()]

    # General-purpose way to build an agent
    # (internally it may use ReAct, tool calling, or a similar mechanism).
    agent = create_agent(llm, tools)

    result = agent.invoke(
        {"messages": [{"role": "user", "content": "What is the weather like in Shenzhen today?"}]}
    )
    # The last message in the returned state is the agent's final answer.
    print(result["messages"][-1].content)
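
The agent call above hides the loop itself. As a rough, framework-free sketch of the Thought → Action → Observation cycle, the block below drives a scripted stand-in for the model. Everything in it is an assumption made for illustration: `fake_llm`, the `lookup_weather` tool with its canned data, the `TOOLS` registry, and the `Action: name[arg]` text format are hypothetical and are not what `create_agent` does internally.

```python
import re
from typing import Callable, Dict


def lookup_weather(city: str) -> str:
    """Hypothetical tool: returns canned data instead of calling a real API."""
    return {"Shenzhen": "28C, cloudy"}.get(city, "unknown")


TOOLS: Dict[str, Callable[[str], str]] = {"lookup_weather": lookup_weather}


def fake_llm(transcript: str) -> str:
    """Scripted stand-in for a model: acts once, then answers from the observation."""
    if "Observation:" not in transcript:
        return "Thought: I need current data.\nAction: lookup_weather[Shenzhen]"
    observation = transcript.rsplit("Observation: ", 1)[1].strip()
    return f"Thought: I have what I need.\nFinal Answer: {observation}"


def react(question: str, llm: Callable[[str], str], max_steps: int = 5) -> str:
    """Run the loop until the model answers or the step budget runs out."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)                      # Thought (+ Action or Final Answer)
        transcript += step + "\n"
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = re.search(r"Action: (\w+)\[(.*?)\]", step)
        if match is None:
            return "stopped: no action or answer"
        name, arg = match.groups()
        tool = TOOLS.get(name)
        observation = tool(arg) if tool else f"unknown tool {name}"
        transcript += f"Observation: {observation}\n"  # fed back on the next turn
    return "stopped: step limit reached"


answer = react("What is the weather in Shenzhen today?", fake_llm)
print(answer)
```

The `max_steps` budget is the part worth keeping in any real loop: without it, a model that never emits a final answer would keep calling tools indefinitely.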

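2. Plan-and-Execute

Core: generate a global plan first (Plan), then carry it out step by step (Execute).

Essence: turns implicit reasoning into an explicit structure, which reduces ReAct's trial-and-error cost and makes long tasks more stable.

Use cases: long-chain tasks (report generation, complex analysis, project breakdown, multi-step execution).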

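    from typing import List, TypedDict

    from langgraph.graph import StateGraph, END
    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(temperature=0)

    # A TypedDict schema lets LangGraph merge each node's partial update
    # into the shared state.
    class PlanState(TypedDict, total=False):
        task: str
        plan: List[str]
        results: List[str]

    def planner(state: PlanState) -> dict:
        # Ask the model for a plan and keep the non-empty lines as steps.
        text = llm.invoke(
            f"Break the following task into a 3-5 step execution plan: {state['task']}"
        ).content
        return {"plan": [line.strip() for line in text.split("\n") if line.strip()]}

    def executor(state: PlanState) -> dict:
        # Placeholder: a real executor would run a tool or model call per step.
        return {"results": [f"Done: {step}" for step in state["plan"]]}

    graph = StateGraph(PlanState)
    graph.add_node("plan", planner)
    graph.add_node("execute", executor)
    graph.set_entry_point("plan")
    graph.add_edge("plan", "execute")
    graph.add_edge("execute", END)

    app = graph.compile()
    print(app.invoke({"task": "Produce an AI trends report"}))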

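3. Reflection / Self-Refine

Core: generate → structured evaluation → iterative refinement (until a threshold is reached).

Essence: introduces a built-in evaluator (Critic) so that the model forms a self-feedback loop (loosely analogous to a discrete version of gradient descent).

Use cases: high-quality text generation, complex reasoning, code optimization, and other tasks that benefit from continued improvement.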

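    from langchain_openai import ChatOpenAI
    from pydantic import BaseModel, Field

    llm = ChatOpenAI(temperature=0)

    class Review(BaseModel):
        score: int = Field(..., description="Score from 1 to 10")
        feedback: str = Field(..., description="Suggestions for improvement")

    # The critic returns a validated Review object instead of free text.
    structured_llm = llm.with_structured_output(Review)

    def reflect_loop(task: str, max_iters: int = 5, threshold: int = 8) -> str:
        draft = llm.invoke(f"Complete this task: {task}").content
        for _ in range(max_iters):
            review: Review = structured_llm.invoke(
                f"Score the following strictly and suggest improvements:\n{draft}"
            )
            if review.score >= threshold:
                return draft  # good enough, stop early
            draft = llm.invoke(
                "Revise the content strictly according to the review below.\n"
                f"Review: {review.feedback}\nOriginal content: {draft}"
            ).content
        return draft  # iteration budget exhausted, return the latest draft

    result = reflect_loop("Write a high-quality introduction to AI agents")
    print("\nFinal result:\n", result)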

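II. Capability Extension and Augmentation Patterns

4. Tool Use / Function Calling

Core: call external capabilities through a structured interface (tool/function).

Essence: extends the LLM from a "knowledge system" into an "action system" that is connected to the real world and to APIs.

Use cases: calculation, search, database queries, API calls, and task automation.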

    from langchain_openai import ChatOpenAI
    from langchain_core.tools import tool

    @tool
    def multiply(a: int, b: int) -> int:
        """Multiply two numbers."""
        return a * b

    llm = ChatOpenAI(temperature=0)

    # Bind the tool: the LLM can now emit tool_calls instead of plain text.
    llm_with_tools = llm.bind_tools([multiply])
    response = llm_with_tools.invoke("Compute 123 * 456")

    # The model only requests the call; in production the tool_calls still
    # have to be parsed and executed, manually or by an executor.
    print(response.tool_calls)
    # e.g. [{'name': 'multiply', 'args': {'a': 123, 'b': 456}, ...}]
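
The step left open above, executing the requested calls, can be sketched without any framework. The block below assumes each call is a dict with `name`, `args`, and `id` keys, matching the shape printed above; the `REGISTRY` mapping and the `run_tool_calls` helper are hypothetical names introduced for illustration.

```python
from typing import Any, Callable, Dict, List


def multiply(a: int, b: int) -> int:
    """Plain-Python counterpart of the tool the model was offered."""
    return a * b


# Hypothetical registry: tool name -> callable that implements it.
REGISTRY: Dict[str, Callable[..., Any]] = {"multiply": multiply}


def run_tool_calls(tool_calls: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Execute each requested call and pair the result with its call id."""
    results = []
    for call in tool_calls:
        fn = REGISTRY.get(call["name"])
        if fn is None:
            content = f"error: unknown tool {call['name']}"
        else:
            try:
                content = str(fn(**call["args"]))
            except TypeError as exc:  # wrong or missing arguments from the model
                content = f"error: {exc}"
        results.append({"tool_call_id": call.get("id"), "content": content})
    return results


calls = [{"name": "multiply", "args": {"a": 123, "b": 456}, "id": "call_1"}]
outputs = run_tool_calls(calls)
print(outputs)
```

Errors are returned as content rather than raised, so that they can be passed back to the model as an observation and the model gets a chance to correct its arguments.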

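5. Memory & Context Management

Core: short-term conversational memory plus long-term memory (RAG / vector store).

Essence: extends the context window into a retrievable state system (State), which works around the context length limit.

Use cases: personalized assistants, long-running conversations, knowledge-driven systems, and enterprise knowledge bases.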

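    from langchain_openai import ChatOpenAI
    from langchain_core.chat_history import InMemoryChatMessageHistory
    from langchain_core.prompts import ChatPromptTemplate
    from langchain_core.runnables.history import RunnableWithMessageHistory

    llm = ChatOpenAI(temperature=0)

    # session_id -> message history (in-process only, lost on restart)
    store = {}

    def get_session_history(session_id: str) -> InMemoryChatMessageHistory:
        if session_id not in store:
            store[session_id] = InMemoryChatMessageHistory()
        return store[session_id]

    prompt = ChatPromptTemplate.from_messages([
        ("system", "You are an AI assistant with memory."),
        ("placeholder", "{chat_history}"),
        ("human", "{input}"),
    ])

    chain = prompt | llm
    chain_with_memory = RunnableWithMessageHistory(
        chain,
        get_session_history,
        input_messages_key="input",
        history_messages_key="chat_history",
    )

    # Calls that share a session_id share the same history.
    config = {"configurable": {"session_id": "demo"}}
    chain_with_memory.invoke({"input": "My name is Lin."}, config=config)
    print(chain_with_memory.invoke({"input": "What is my name?"}, config=config).content)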
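
The long-term half of this pattern, storing facts and retrieving the relevant ones later, can be illustrated with a toy in-memory store. This is only a sketch under loud assumptions: `embed` is a word-count stand-in for a real embedding model, and `LongTermMemory` is a hypothetical class that stands in for a vector store.

```python
import math
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real system would use a model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class LongTermMemory:
    """Hypothetical stand-in for a vector store: add facts, search by similarity."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> List[str]:
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = LongTermMemory()
memory.add("The user prefers answers in bullet points")
memory.add("The user lives in Shenzhen")
memory.add("The user is allergic to peanuts")

# Only the retrieved facts are placed in the prompt, not the whole store.
hits = memory.search("what food is the user allergic to", k=1)
print(hits)
```

Retrieving the top `k` facts per turn is what keeps the prompt size bounded while the store itself keeps growing.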

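III. Multi-Agent and Collaboration Patterns

6. Role-based Multi-Agent

Core: agents with different roles divide the work and collaborate (Researcher / Writer / Analyst).

Essence: role decomposition reduces the complexity any single model has to handle and raises the ceiling of the overall system.

Use cases: content production, research and analysis, and collaborative multi-step tasks.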

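    from crewai import Agent, Task, Crew
    from langchain_openai import ChatOpenAI

    # Note: depending on the CrewAI version, `llm` may instead need to be a
    # model name string or a crewai.LLM instance.
    llm = ChatOpenAI(model="gpt-4o-mini", temperature=0.2)

    researcher = Agent(
        role="Researcher",
        goal="Collect the latest AI trends",
        backstory="You are a highly specialized AI research expert",
        llm=llm,
    )
    writer = Agent(
        role="Writer",
        goal="Turn the findings into a high-quality report",
        backstory="You are good at making complex material easy to understand",
        llm=llm,
    )

    task1 = Task(
        description="Research AI trends for 2026",
        expected_output="A bullet list of key trends",
        agent=researcher,
    )
    # context=[task1] passes the research output to the writer's task.
    task2 = Task(
        description="Write a complete report based on the research findings",
        expected_output="A complete report",
        agent=writer,
        context=[task1],
    )

    crew = Crew(agents=[researcher, writer], tasks=[task1, task2], verbose=True)
    print(crew.kickoff())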

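7. Supervisor + Workers (including Debate mode)

Core: a Supervisor handles task dispatch and routing, and Workers execute (in parallel or in debate with one another).

Essence: introduces a central scheduling layer that turns a complex task into a manageable system of subtasks (similar to operating-system scheduling).

Use cases: complex task systems, multi-tool environments, multi-agent collaboration, and cases that need a quality boost (Debate).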

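    from typing import TypedDict

    from langgraph.graph import StateGraph, END
    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(temperature=0.7)

    # A TypedDict schema keeps `task` available to every node, because each
    # node's partial update is merged into the shared state.
    class TeamState(TypedDict, total=False):
        task: str
        worker_output: str
        critic_feedback: str
        route: str

    def worker(state: TeamState) -> dict:
        result = llm.invoke(f"Complete this task: {state['task']}").content
        return {"worker_output": result}

    def critic(state: TeamState) -> dict:
        feedback = llm.invoke(
            f"Evaluate the quality of the following result:\n{state['worker_output']}"
        ).content
        return {"critic_feedback": feedback}

    def supervisor(state: TeamState) -> dict:
        decision = llm.invoke(
            f"Task: {state['task']}\n"
            f"Current result: {state.get('worker_output')}\n"
            f"Evaluation: {state.get('critic_feedback')}\n"
            "Choose the next step: worker / debate / end"
        ).content.strip().lower()
        if "worker" in decision:
            return {"route": "worker"}
        elif "debate" in decision:
            return {"route": "debate"}
        else:
            return {"route": "end"}

    def debate(state: TeamState) -> dict:
        # Include the current answer so the model has something to improve.
        improved = llm.invoke(
            "Improve the answer using the feedback below.\n"
            f"Feedback: {state['critic_feedback']}\n"
            f"Answer: {state['worker_output']}"
        ).content
        return {"worker_output": improved}

    builder = StateGraph(TeamState)
    builder.add_node("worker", worker)
    builder.add_node("critic", critic)
    builder.add_node("supervisor", supervisor)
    builder.add_node("debate", debate)

    builder.set_entry_point("worker")
    builder.add_edge("worker", "critic")
    builder.add_edge("critic", "supervisor")
    builder.add_conditional_edges(
        "supervisor",
        lambda state: state["route"],
        {"worker": "worker", "debate": "debate", "end": END},
    )
    builder.add_edge("debate", "critic")

    # The loop ends only when the supervisor chooses "end"; LangGraph's
    # recursion limit is the backstop if it never does.
    graph = builder.compile()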

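IV. Orchestration and Control Patterns

8. Sequential Prompting (Prompt Chaining)

Core: multiple prompts run in sequence, with each step's output used as the next step's input.

Essence: breaks a complex task into a linear pipeline, which lowers the complexity of each individual inference.

Use cases: process-style tasks with a clear structure (extract → analyze → summarize).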

    from langchain_openai import ChatOpenAI
    from langchain_core.output_parsers import StrOutputParser
    from langchain_core.prompts import ChatPromptTemplate

    llm = ChatOpenAI(temperature=0)

    # Each prompt has a single variable, so the plain string produced by the
    # previous step is accepted as the value of {text}.
    chain = (
        ChatPromptTemplate.from_template("Extract keywords: {text}")
        | llm
        | StrOutputParser()
        | ChatPromptTemplate.from_template("Expand on these keywords: {text}")
        | llm
        | StrOutputParser()
        | ChatPromptTemplate.from_template("Summarize the final content: {text}")
        | llm
    )

    result = chain.invoke({"text": "Preparations for the 2026 World Cup"})
    print(result.content)

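9. Workflow / DAG (LangGraph)

Core: use a directed acyclic graph (DAG) to control the execution flow.

Essence: turns the agent from a "black box" into a "controllable state machine", giving a deterministic flow plus observability.

Use cases: production systems, complex workflows, and scenarios that need controllability and debuggability.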

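    from typing import TypedDict

    from langgraph.graph import StateGraph, END

    class FlowState(TypedDict, total=False):
        document: str
        data: str
        risk: str
        decision: str

    # Stub nodes: each returns a fixed value to keep the control flow visible.
    def extract(state: FlowState) -> dict:
        return {"data": "extraction complete"}

    def analyze(state: FlowState) -> dict:
        return {"risk": "low"}

    def decide(state: FlowState) -> dict:
        return {"decision": "approved" if state["risk"] == "low" else "rejected"}

    g = StateGraph(FlowState)
    g.add_node("extract", extract)
    g.add_node("analyze", analyze)
    g.add_node("decide", decide)
    g.set_entry_point("extract")
    g.add_edge("extract", "analyze")
    g.add_edge("analyze", "decide")
    g.add_edge("decide", END)

    # The input document is a placeholder; the stub nodes do not read it.
    print(g.compile().invoke({"document": "sample input"}))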
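
What "DAG-controlled execution" means can also be shown with the standard library alone. The sketch below is not how LangGraph schedules nodes; it assumes a hypothetical `DEPENDS_ON` mapping from each node to its prerequisites and uses `graphlib.TopologicalSorter` (Python 3.9+) to derive a valid run order.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set, Tuple

State = Dict[str, str]


def extract(state: State) -> State:
    return {"data": "extracted"}


def analyze(state: State) -> State:
    return {"risk": "low" if state["data"] else "high"}


def decide(state: State) -> State:
    return {"decision": "approve" if state["risk"] == "low" else "reject"}


NODES: Dict[str, Callable[[State], State]] = {
    "extract": extract,
    "analyze": analyze,
    "decide": decide,
}

# node -> set of nodes that must finish first (hypothetical layout)
DEPENDS_ON: Dict[str, Set[str]] = {
    "extract": set(),
    "analyze": {"extract"},
    "decide": {"analyze"},
}


def run_dag(
    nodes: Dict[str, Callable[[State], State]],
    depends_on: Dict[str, Set[str]],
    state: State,
) -> Tuple[State, List[str]]:
    """Run every node once, in an order that respects the dependencies."""
    order = list(TopologicalSorter(depends_on).static_order())
    trace = []
    for name in order:
        state = {**state, **nodes[name](state)}  # merge the node's partial update
        trace.append(name)                       # execution trace for observability
    return state, trace


final_state, trace = run_dag(NODES, DEPENDS_ON, {})
print(trace, final_state)
```

Because the order is computed from declared dependencies, a cycle is rejected up front (`static_order` raises `graphlib.CycleError`) instead of surfacing as a hang at run time.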

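10. Router / Selector

Core: automatically choose a path, model, or tool based on the input.

Essence: uses the LLM as a combined classifier and scheduler to split traffic dynamically.

Use cases: multi-model systems, cost optimization, and task dispatch (code / general / search).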

    from typing import TypedDict

    from langgraph.graph import StateGraph, END
    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(temperature=0)

    class RouteState(TypedDict, total=False):
        input: str
        route: str
        output: str

    # ----------------------
    # Handler nodes
    # ----------------------
    def code_agent(state: RouteState) -> dict:
        result = llm.invoke(f"Solve this problem in Python: {state['input']}").content
        return {"output": result}

    def general_agent(state: RouteState) -> dict:
        result = llm.invoke(f"Answer this question: {state['input']}").content
        return {"output": result}

    # ----------------------
    # Router (LLM as classifier)
    # ----------------------
    def router(state: RouteState) -> dict:
        decision = llm.invoke(
            "Classify the following question:\n"
            "- code (programming / technical question)\n"
            "- general (ordinary question)\n"
            f"Output only code or general: {state['input']}"
        ).content.strip().lower()
        # Map anything unexpected (e.g. "Code.") onto a known label so the
        # conditional edge below always finds a target.
        return {"route": "code" if "code" in decision else "general"}

    # ----------------------
    # Build the graph
    # ----------------------
    builder = StateGraph(RouteState)
    builder.add_node("router", router)
    builder.add_node("code_agent", code_agent)
    builder.add_node("general_agent", general_agent)
    builder.set_entry_point("router")

    # Conditional routing (the core of the pattern)
    builder.add_conditional_edges(
        "router",
        lambda state: state["route"],
        {"code": "code_agent", "general": "general_agent"},
    )
    builder.add_edge("code_agent", END)
    builder.add_edge("general_agent", END)
    graph = builder.compile()

    # ----------------------
    # Run
    # ----------------------
    print(graph.invoke({"input": "Write a quicksort in Python"}))
    print(graph.invoke({"input": "What is an AI agent?"}))

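11. Parallelization (parallel sampling + Self-Consistency)

Core: generate along several paths in parallel, then pick the best result by voting or with a Judge.

Essence: uses randomness (temperature) to build a sampling ensemble, which improves the stability and quality of the answer.

Use cases: open-ended questions, complex reasoning, and scenarios that need highly reliable output.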

    from langchain_openai import ChatOpenAI
    from langchain_core.runnables import RunnableParallel, RunnableLambda

    llm = ChatOpenAI(temperature=0.7)  # higher temperature for more diverse samples

    # The same prompt is sent to three branches that run concurrently.
    parallel = RunnableParallel({"v1": llm, "v2": llm, "v3": llm})

    def judge(results: dict) -> str:
        answers = [v.content for v in results.values()]
        prompt = f"Pick the best of the following answers and explain why: {answers}"
        return llm.invoke(prompt).content

    chain = parallel | RunnableLambda(judge)
    print(chain.invoke("Explain what an AI agent is"))
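
The example above uses a Judge. The voting variant (Self-Consistency) suits tasks whose answers are short and directly comparable, such as a number or a label. A minimal sketch follows, assuming the samples have already been collected; `normalize`, `majority_vote`, and the sample values are hypothetical and chosen only for illustration.

```python
from collections import Counter
from typing import List, Tuple


def normalize(answer: str) -> str:
    """Make superficially different answers comparable before counting."""
    return answer.strip().lower().rstrip(".")


def majority_vote(answers: List[str]) -> Tuple[str, float]:
    """Return the most common normalized answer and its share of the votes."""
    if not answers:
        raise ValueError("need at least one answer to vote on")
    counts = Counter(normalize(a) for a in answers)
    winner, votes = counts.most_common(1)[0]  # ties: first-seen answer wins
    return winner, votes / len(answers)


# Illustrative samples, as if drawn from five runs at temperature > 0.
samples = ["42", " 42.", "41", "42", "40"]
winner, agreement = majority_vote(samples)
print(winner, agreement)
```

The agreement ratio doubles as a cheap confidence signal: a low value is a reasonable trigger for falling back to a Judge or to human review.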

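V. Production and Reliability Patterns (new focus for 2026)

12. Human-in-the-Loop + Guardrails

Core: human intervention at critical steps, plus structured validation of the output.

Essence: adds a safety boundary and final human accountability to an automated system, so that it cannot run out of control.

Use cases: high-risk scenarios (finance, healthcare, content publishing), enterprise systems, and automated decision workflows.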

    from typing import TypedDict

    from langgraph.graph import StateGraph, END
    from langgraph.checkpoint.memory import MemorySaver
    from langchain_openai import ChatOpenAI
    from pydantic import BaseModel, Field

    llm = ChatOpenAI(temperature=0)

    # ----------------------
    # Output schema (Guardrails)
    # ----------------------
    class OutputSchema(BaseModel):
        decision: str = Field(..., description="Final decision")
        reason: str = Field(..., description="Explanation of the reason")

    class ApprovalState(TypedDict, total=False):
        input: str
        draft: OutputSchema
        approved: bool
        result: object

    # ----------------------
    # LLM generation
    # ----------------------
    def generate(state: ApprovalState) -> dict:
        structured_llm = llm.with_structured_output(OutputSchema)
        result = structured_llm.invoke(f"Make a decision based on the following: {state['input']}")
        return {"draft": result}

    # ----------------------
    # Human approval node
    # ----------------------
    def human_approval(state: ApprovalState) -> dict:
        print("\n====== Human review ======")
        print("Model output:", state["draft"])
        # In production this would be an API call or a front-end UI.
        user_input = input("Approve? (yes/no): ")
        return {"approved": user_input.strip().lower() == "yes"}

    # ----------------------
    # Final execution
    # ----------------------
    def finalize(state: ApprovalState) -> dict:
        if state["approved"]:
            return {"result": state["draft"]}
        return {"result": "Execution rejected"}

    # ----------------------
    # Graph
    # ----------------------
    builder = StateGraph(ApprovalState)
    builder.add_node("generate", generate)
    builder.add_node("human_approval", human_approval)
    builder.add_node("finalize", finalize)
    builder.set_entry_point("generate")

    # Review point (key step): here the blocking input() call plays the role
    # of the pause. A production graph would more likely pause the run itself,
    # for example by compiling with interrupt_before=["human_approval"] and
    # resuming later from the checkpoint.
    builder.add_edge("generate", "human_approval")
    builder.add_edge("human_approval", "finalize")
    builder.add_edge("finalize", END)

    # Checkpointer (supports resuming a thread)
    checkpointer = MemorySaver()
    graph = builder.compile(checkpointer=checkpointer)

    # ----------------------
    # Run
    # ----------------------
    config = {"configurable": {"thread_id": "demo-user"}}
    result = graph.invoke(
        {"input": "Should we approve sending a marketing email to the user?"},
        config=config,
    )
    print("\nFinal result:", result["result"])
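
The two controls in this pattern can be separated from any framework: a guardrail that rejects malformed output before anyone sees it, and an approval gate that must say yes before anything runs. The sketch below is an assumption-laden illustration; `ALLOWED_DECISIONS`, `Decision`, `validate`, and `gated_execute` are hypothetical names, and the reviewer is modeled as a plain callback.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Assumption for this sketch: only these two decisions are acceptable.
ALLOWED_DECISIONS = {"approve", "reject"}


@dataclass
class Decision:
    decision: str
    reason: str


def validate(raw: Dict[str, str]) -> Decision:
    """Guardrail: accept only a known decision with a non-empty reason."""
    decision = str(raw.get("decision", "")).strip().lower()
    reason = str(raw.get("reason", "")).strip()
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"decision must be one of {sorted(ALLOWED_DECISIONS)}")
    if not reason:
        raise ValueError("reason must not be empty")
    return Decision(decision, reason)


def gated_execute(raw: Dict[str, str], approver: Callable[[Decision], bool]) -> str:
    """Run the action only if the output is valid AND a human approves it."""
    try:
        checked = validate(raw)
    except ValueError as exc:
        return f"blocked by guardrail: {exc}"
    if not approver(checked):  # in production: a review queue or UI, not a callback
        return "rejected by reviewer"
    return f"executed: {checked.decision}"


model_output = {"decision": "Approve", "reason": "The user opted in to marketing email"}
print(gated_execute(model_output, approver=lambda d: True))
```

Validation runs before the human step on purpose: reviewers then only spend time on outputs that are at least well-formed.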